Will tomorrow’s AI be dumbed down by today’s networks?

There is a lot of talk about AI (artificial intelligence) and the impact it is likely to have, both good and bad, on our society. AI looks set to affect everything from healthcare to politics and from education to commerce. Of course, all this AI is going to demand more intensive computing resources, but one question that isn’t being asked is this: what sort of network infrastructure will AI require?

Let’s step back a moment and look at the limitations of naturally evolved human intelligence, or even that of other animals. Our intelligence is restricted by the size of the neural networks in our brains, which, impressive though they are, limit our ability to process and store information. One of the cornerstones of AI is that it can take advantage of modern computing infrastructure, meshed together in huge data centers, to provide virtually unlimited storage and processing capability.

But there’s a second, equally important aspect of human intelligence: our ability to receive and process new information from the environment around us. Our brains are constantly fed huge amounts of diverse sensory information, which lets us adjust to changes in our situation. In computing terms, this would be called I/O (input/output) capacity.

There’s every reason to suppose that AI will also need to scale in both of these dimensions. Many commonly cited AI use cases have a distinct learning function, such as the assimilation of language, the correlation of medical symptoms and causes, or the observation of patterns in financial markets. This ‘process and storage’ task is not particularly time-sensitive. The compute and storage resources in the data center or AI cloud need to be networked together in order to scale, but the I/O capacity isn’t likely to be stressed.

Then there is the real-time dimension: the constantly changing information that the AI needs to adapt to. In healthcare, that might be the sampling of virology reports, or of patients’ symptoms and locations from around the world, enabling the AI to hypothesize about, identify and track a future pandemic. An AI model of seismic activity could use real-time measurements to predict earthquakes. An AI-enabled customer service system could draw on IoT data to predict issues and alert consumers before they happen.

But all of this is predicated on our having sufficient network capacity to keep pace with the core computing power that will be the AI’s brain. And it isn’t the network capacity within the data center, or even between data centers, that matters. It is the network capacity out to individuals and devices at the edge of the network. That means mobile networks and, for more intensive AI use cases, broadband networks. We need the capacity at the network edge to keep pace with the computing power in the core.

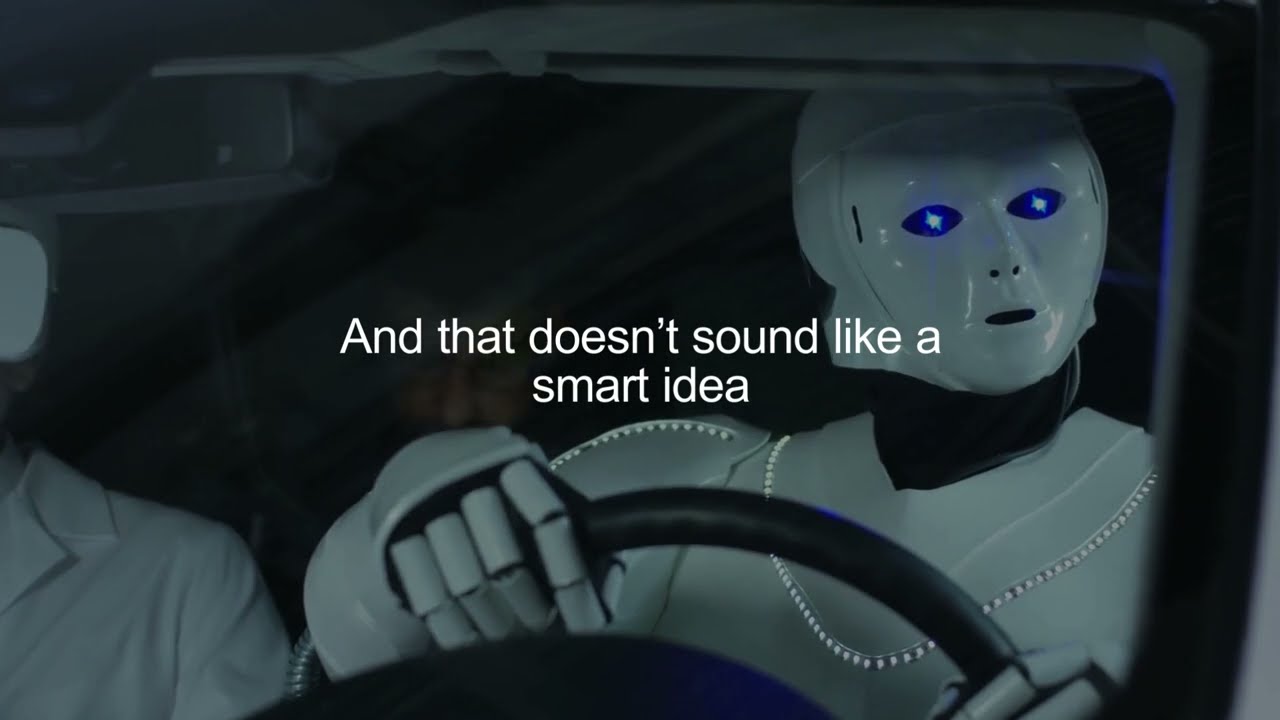

Many experts are warning of the dangers of AI, just as others are promoting its potential for positive change. But either way, if we don’t have sufficient network capacity to feed it, we will just have a big brain that’s being kept in the dark, which certainly doesn’t sound like a smart idea.